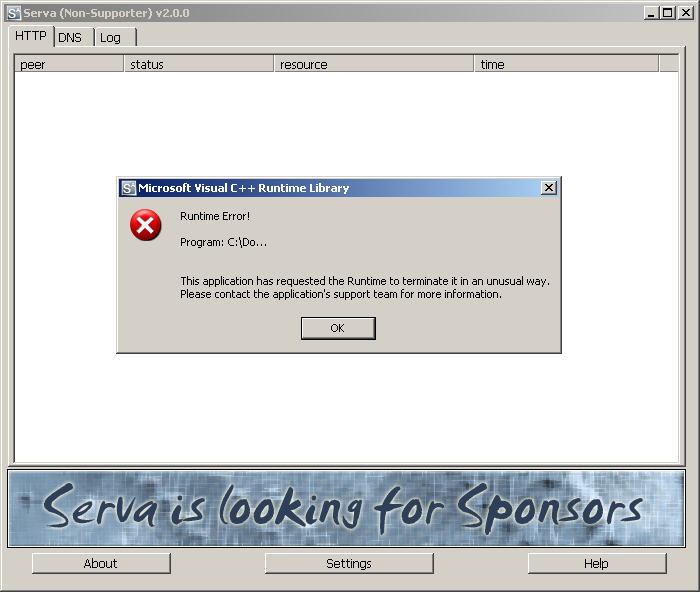

Last year, while playing with the famous Peach fuzzer for the first time, I discovered two remote denial-of-service vulnerabilities in the DNS and HTTP modules of the handy all-in-one server “Serva”. The root cause of both DoS conditions is a standard uncaught C++ exception, triggered by a malformed DNS QueryName request to the DNS service and by a malformed HTTP GET request to the HTTP service.

Debug:

    (b50.18c): Unknown exception - code 000006d9 (first chance)
    (b50.a9c): C++ EH exception - code e06d7363 (first chance)
    (b50.a9c): C++ EH exception - code e06d7363 (!!! second chance !!!)
    eax=017d6668 ebx=00000000 ecx=00000000 edx=00000003 esi=017d66f0 edi=ffffffff
    eip=7c812afb esp=017d6664 ebp=017d66b8 iopl=0         nv up ei pl nz na pe nc
    cs=001b  ss=0023  ds=0023  es=0023  fs=003b  gs=0000             efl=00000206
    kernel32!RaiseException+0x53:
    7c812afb 5e              pop     esi
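Serva’s source is not public, so the following is only a hypothetical C++ sketch of this class of bug, not the vendor’s code. A QNAME parser (`parse_qname`, a made-up name) throws when a label length runs past the end of the packet. If nothing catches that exception, the C++ runtime ends up in `std::terminate` and the whole server process dies, which is what the second-chance `e06d7363` (MSVC C++ exception) above shows. Catching at the per-request boundary (`handle_query`, also hypothetical) turns the crash into a dropped packet.

```cpp
#include <cstddef>
#include <cstdint>
#include <iostream>
#include <stdexcept>
#include <string>
#include <vector>

// Hypothetical QNAME decoder: reads length-prefixed labels starting at
// `offset` and throws on anything malformed instead of reading out of bounds.
std::string parse_qname(const std::vector<std::uint8_t>& msg, std::size_t offset) {
    std::string name;
    while (true) {
        // vector::at() throws std::out_of_range if the name is not terminated
        const std::uint8_t len = msg.at(offset);
        if (len == 0) break;  // zero-length root label ends the name
        // Labels are at most 63 bytes; compression pointers (top bits set)
        // are simply rejected in this sketch.
        if (len > 63) throw std::runtime_error("unsupported label type or length");
        ++offset;
        if (offset + len > msg.size()) throw std::out_of_range("label exceeds message");
        if (!name.empty()) name += '.';
        name.append(msg.begin() + offset, msg.begin() + offset + len);
        offset += len;
    }
    return name;
}

// Per-request boundary: a malformed query is logged and dropped here,
// so the exception never escapes the worker and never terminates the server.
bool handle_query(const std::vector<std::uint8_t>& msg, std::string& out) {
    try {
        out = parse_qname(msg, 12);  // QNAME follows the fixed 12-byte DNS header
        return true;
    } catch (const std::exception& e) {
        std::cerr << "dropping malformed query: " << e.what() << '\n';
        return false;
    }
}
```

Without the `try`/`catch` in `handle_query`, the same truncated packet would propagate the exception up the call stack and take the service down with it.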

The bugs themselves are not very interesting, but the discussion that evolved during the coordination process is. I first contacted the vendor in June 2012 and received a promising answer within a really short time; looks like Patrick Masotta is a very ambitious guy ;-).

The discussion ended up with the following thrilling question: do you consider a denial-of-service vulnerability a “real vulnerability”?

If you take the official CVSS definitions of the different metrics, especially the description of the Availability Impact, it’s a vulnerability:

2.1.6 Availability Impact (A)

This metric measures the impact to availability of a successfully exploited vulnerability. Availability refers to the accessibility of information resources. Attacks that consume network bandwidth, processor cycles, or disk space all impact the availability of a system. The possible values for this metric are listed in Table 6. Increased availability impact increases the vulnerability score.

By sending a malformed request, you can easily shut down the resource, and since the Availability Impact is part of the CVSS scoring, this raises the score. It’s not a vulnerability like a Remote Code Execution, which matches the public view of a “vulnerability” more closely because an attacker is able to execute code in the system context, which could lead to a full system compromise. Still, it can harm systems and users, and finally the stressed administrator who has to restart the server again and again ;-).
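To make the scoring argument concrete, here is a small C++ sketch of the CVSS v2 base score equation, using the metric weights from the CVSS v2 guide. The vector AV:N/AC:L/Au:N/C:N/I:N/A:P (reachable over the network, low complexity, no authentication, availability impact only) is my own assumption for illustration, not an official score for the Serva bugs, and the type and function names are made up for this example.

```cpp
#include <cmath>

// Hypothetical names for the CVSS v2 base metrics used in this sketch.
enum class AccessVector { Local, Adjacent, Network };
enum class AccessComplexity { High, Medium, Low };
enum class Authentication { Multiple, Single, None };
enum class Impact { None, Partial, Complete };  // used for C, I and A

// Metric weights as listed in the CVSS v2 guide.
double weight(AccessVector v) {
    switch (v) {
        case AccessVector::Local:    return 0.395;
        case AccessVector::Adjacent: return 0.646;
        case AccessVector::Network:  return 1.0;
    }
    return 0.0;
}

double weight(AccessComplexity c) {
    switch (c) {
        case AccessComplexity::High:   return 0.35;
        case AccessComplexity::Medium: return 0.61;
        case AccessComplexity::Low:    return 0.71;
    }
    return 0.0;
}

double weight(Authentication a) {
    switch (a) {
        case Authentication::Multiple: return 0.45;
        case Authentication::Single:   return 0.56;
        case Authentication::None:     return 0.704;
    }
    return 0.0;
}

double weight(Impact m) {
    switch (m) {
        case Impact::None:     return 0.0;
        case Impact::Partial:  return 0.275;
        case Impact::Complete: return 0.660;
    }
    return 0.0;
}

// CVSS v2 base score: combines the impact and exploitability subscores,
// then rounds the result to one decimal place.
double cvss2_base_score(AccessVector av, AccessComplexity ac, Authentication au,
                        Impact conf, Impact integ, Impact avail) {
    const double impact = 10.41 * (1.0 - (1.0 - weight(conf)) *
                                         (1.0 - weight(integ)) *
                                         (1.0 - weight(avail)));
    const double exploitability = 20.0 * weight(av) * weight(ac) * weight(au);
    const double f = (impact == 0.0) ? 0.0 : 1.176;  // zero impact forces a zero score
    const double raw = ((0.6 * impact) + (0.4 * exploitability) - 1.5) * f;
    return std::round(raw * 10.0) / 10.0;
}
```

With that assumed vector, the sketch yields 5.0. Scoring the crash as a complete loss of availability (A:C) gives 7.8, while the same vector with no availability impact (A:N) gives 0.0, so in this case the availability metric alone decides whether the bug scores at all.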

If you take the MITRE definition, it’s a vulnerability:

An uncaught exception could cause the system to be placed in a state that could lead to a crash, exposure of sensitive information or other unintended behaviors.

If you take the OWASP definition, it’s a vulnerability:

The Denial of Service (DoS) attack is focused on making unavailable a resource (site, application, server) for the purpose it was designed. There are many ways to make a service unavailable for legitimate users by manipulating network packets, programming, logical, or resources handling vulnerabilities, among others. If a service receives a very large number of requests, it may stop providing service to legitimate users. In the same way, a service may stop if a programming vulnerability is exploited, or the way the service handles resources used by it.

Sometimes the attacker can inject and execute arbitrary code while performing a DoS attack in order to access critical information or execute commands on the server. Denial-of-service attacks significantly degrade service quality experienced by legitimate users. It introduces large response delays, excessive losses, and service interruptions, resulting in direct impact on availability.

Even if the requests causing a DoS condition are not meant to be sent by a standard user (or by a default client), the root cause is still a bug, regardless of whether the application is meant to run in a dedicated environment or not. The Serva website states:

“Network installations of Microsoft’s OSs are usually performed on non-hostile environments (or at least behind a firewall and/or NAT device).”

This is a good argument, but compared to other vulnerabilities found in products that were never meant to run on an Internet-facing server either, such as Windows SMB/RPC or Symantec Backup Exec, it’s still a vulnerability. Otherwise it’s like closing your eyes to everything that sits behind a firewall or NAT. Do you remember this security-through-obscurity thing?! 😉

Let me argue why a DoS should be rated as a vulnerability, using three scenarios:

- The administrator installed the application in a non-dedicated environment. Yes, it’s not meant to be used like this, but there are always administrators who don’t care about such things. If everyone followed such strict rules, only a small number of vulnerabilities could be abused at all, because everything would be hidden behind a firewall and configured properly.

- It’s installed in a dedicated environment, as advised on the website. If an attacker breaks into the network using another flaw, he could use a DoS bug to divert the administrator’s attention from the actual root cause, as a kind of decoy. While the administrator is busy fixing things on the DNS server, the attacker starts to mount further exploits.

- If you’re using Serva as a PXE environment, an insider could use these bugs to harm the company, perhaps because he’s angry with his boss or for some other reason. If PXE is then unavailable company-wide, that is a significant degradation in the service quality experienced by legitimate users, probably resulting in direct financial loss.

So whether you consider a DoS a real vulnerability or not always depends on your point of view. From a security perspective it’s indeed a vulnerability; from a coder’s perspective it’s more of an annoying behaviour. So I can fully understand Patrick’s argument, from his coding perspective, that these bugs are not real vulnerabilities! It’s like arguing with your boss to get expensive new stuff to play with: this could easily lead to an endless discussion 😉

Thanks for the nice discussion; it’s always great to hear other people’s views on things like that.

By the way, let me quote Patrick’s really funny analogy:

Bottom line: you cannot send me a report on the “security” of my BMW telling me that its engine stalls when the car is driven under the water because my BMW is not a “submarine”; it is just a car meant to be driven over the tarmac.

🙂